The LLM SEO & AEO Guide: How to Optimize a Website for AI Search in 2026

LLM SEO, also called AEO (Answer Engine Optimization) or GEO (Generative Engine Optimization), is the practice of structuring your website so that AI-powered platforms such as ChatGPT, Perplexity, Google AI Overviews, and Microsoft Copilot cite, quote, and recommend your brand when users ask questions relevant to what you do.

Traditional SEO gets you ranked in a list. LLM SEO gets you selected as the answer before that list appears.

The numbers make this urgent. Around 93% of AI search sessions end without a website click, yet brands cited inside AI answers earn 35% more organic clicks than those that are not. LLM crawlers such as GPTBot, ClaudeBot, and PerplexityBot now crawl 3.6× more pages than Googlebot. Most websites are not structured for any of this.

This guide breaks down the complete execution system across 9 phases, from research and technical setup to AI citation tracking and quarterly reporting.

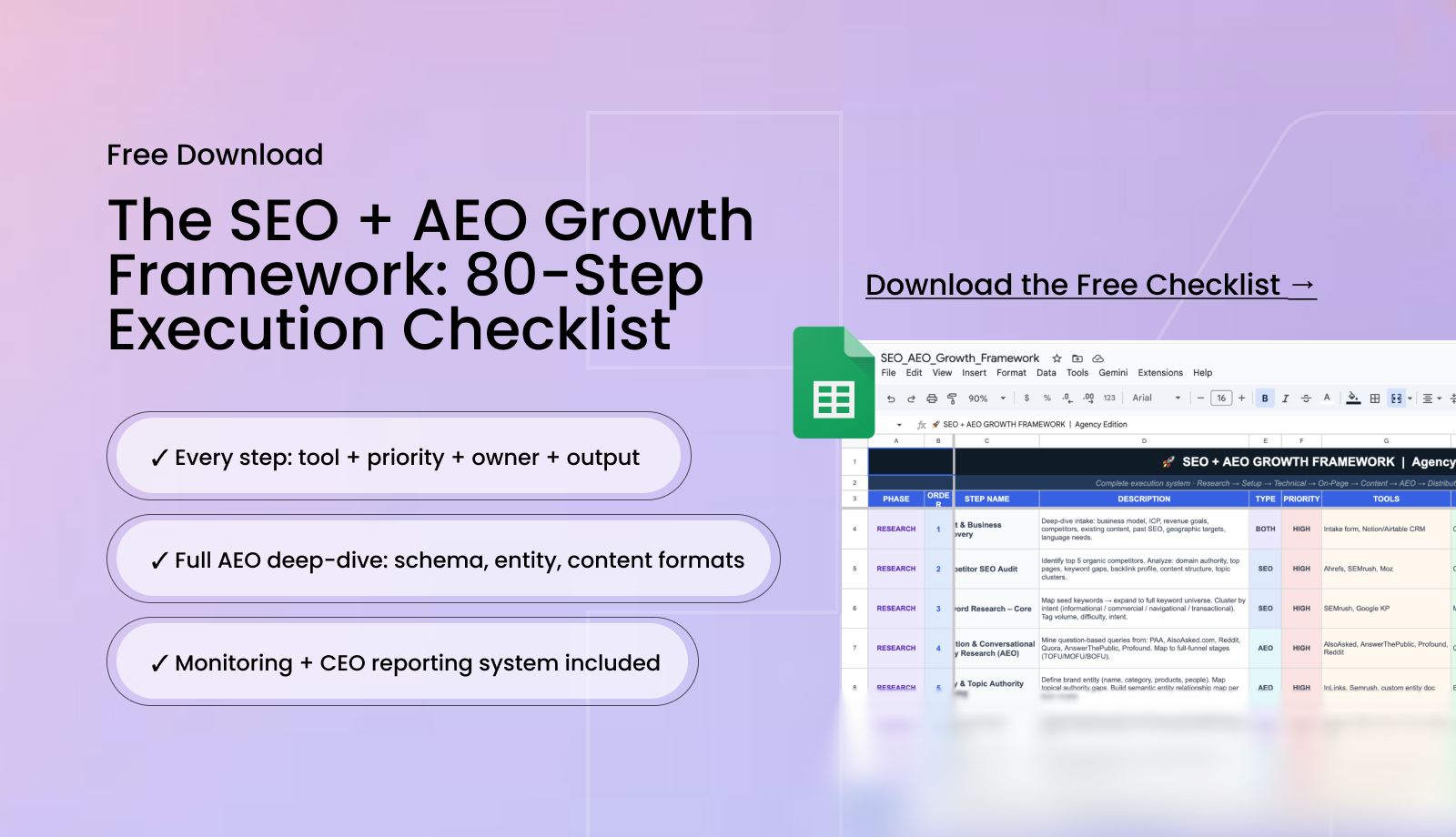

Every step is mapped to the right tool, priority, and output. If you want the full system in a ready-to-use format, you can download the free 80-step SEO + AEO checklist as a Google Sheet and work through it alongside this guide; each phase below maps directly to the checklist.

What you'll learn

- The difference between LLM SEO, AEO, and GEO — and why the method matters more than the label

- How AI engines decide what to cite — and the 3 filters every page must pass

- Phase-by-phase: the complete 9-phase SEO + AEO execution system

- The specific schema types, content formats, and technical steps that produce citations

- How to measure AI visibility — tools, metrics, and what replaces traditional rank tracking

- The most common gaps teams miss — and the signals that prove AEO is working

LLM SEO vs AEO vs GEO - The Only Distinction That Matters

The industry has not settled on a single term yet.

AEO (Answer Engine Optimization) covers all tactics for being cited in AI-generated answers: featured snippets, voice results, AI Overviews, and conversational AI.

GEO (Generative Engine Optimization) focuses specifically on synthesised AI responses from ChatGPT, Perplexity, and Claude.

LLM SEO is the tactical implementation layer: the specific structural and content decisions that make your website parseable and quotable by large language models.

For practical purposes, these terms describe the same goal from different angles. You want your website to be the source AI engines cite when someone asks a question your brand should own. The implementation across all three is identical, which is why this guide treats them as one integrated discipline.

The critical mindset shift: unlike search engines, LLMs do not rank your page. They interpret it. There is no page two in an AI response. Content is either cited or skipped entirely. Every tactic in the 9-phase system below is oriented toward passing the three filters AI engines run before selecting a citation.

How AI Engines Decide What to Cite

Before investing in any of the phases below, understand the selection process. AI engines run every page through three sequential filters:

- Can I crawl and read this page? If your robots.txt blocks AI bots, your JavaScript renders content invisibly to crawlers, or your page loads too slowly for a bot to process — the page is invisible regardless of quality. This is the technical layer.

- Does this page clearly answer the question being asked? 44.2% of all LLM citations come from the first 30% of a page's text. If your answer is buried deep in the page, it is far less likely to be cited. This is the content layer.

- Do I trust this source enough to cite it? Branded web mentions have a 0.664 correlation with AI Overview appearances, significantly higher than backlinks at 0.218 (source: Ahrefs 2026, cited in SEOmator). This is the authority layer.
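The content filter can be spot-checked before you ask any AI engine. The sketch below is a minimal Python illustration, assuming you already have a page's visible text as a plain string: it reports where a given answer sentence starts as a fraction of the text and applies the 30% cutoff from the statistic above. The function names are hypothetical, not part of any SEO tool.

```python
from typing import Optional


def answer_position(page_text: str, answer: str) -> Optional[float]:
    """Return where `answer` starts as a fraction of the page text (0.0 = very top).

    Matching is case-insensitive and ignores differences in whitespace.
    Returns None if the answer sentence does not appear in the text.
    """
    text = " ".join(page_text.split()).lower()
    needle = " ".join(answer.split()).lower()
    if not text or not needle:
        return None
    index = text.find(needle)
    if index == -1:
        return None
    return index / len(text)


def answer_in_first_30_percent(page_text: str, answer: str) -> bool:
    """True if the answer starts within the first 30% of the page text."""
    position = answer_position(page_text, answer)
    return position is not None and position < 0.30
```

Exact matching is deliberately strict: if your template injects navigation or cookie text before the article body, strip it first or the position will be overstated.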

The 9 phases below map directly to these three filters, in the order they need to be built.

Phase 1 - Research

Nothing gets built before the research phase is complete. Every strategy decision (which content to create, which schema to prioritise, which queries to optimise for AI) depends on what this phase surfaces. Skipping or rushing research produces a programme that moves fast in the wrong direction.

Client and Business Discovery

Deep-dive intake covering business model, ideal customer profile, revenue goals, competitors, existing content, past SEO performance, geographic targets, and language needs. Output: a client discovery document and SEO/AEO brief that all subsequent work is measured against. Do this once before anything else.

Competitor SEO Audit

Identify the top 5 organic competitors. Analyse domain authority, top-performing pages, keyword gaps, backlink profile, content structure, and topic cluster architecture. Tools: Ahrefs, Semrush, Moz. Output: a competitor analysis report. Repeat quarterly.

Keyword Research — Core

Map seed keywords to a full keyword universe. Cluster by intent: informational, commercial, navigational, transactional. Tag each cluster by search volume, difficulty, and intent. Tools: Ahrefs, Semrush, Google Keyword Planner. Output: a master keyword map. Refresh monthly.

Question and Conversational Query Research (AEO)

This step is where AEO strategy begins. Mine question-based queries from People Also Ask, AlsoAsked.com, Reddit, Quora, AnswerThePublic, and Profound.

Map questions to full-funnel stages: TOFU (what is / how does), MOFU (X vs Y / best X for use case), BOFU (how to get started / pricing). Output: a question cluster map and FAQ bank. This becomes the foundation for all AEO content.
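The funnel mapping above is usually done by hand, but a rule-based first pass can sort a long question list before review. This is a minimal Python sketch; the keyword patterns are illustrative assumptions drawn from the stage examples in this step (what is / how does, X vs Y / best, pricing / getting started), and anything they miss is left as UNMAPPED for a person to place.

```python
import re

# Illustrative patterns based on the stage examples above. They are checked in
# order, so "what is the best CRM" lands in MOFU rather than TOFU.
FUNNEL_PATTERNS = [
    ("BOFU", re.compile(r"\b(pricing|price|cost|how to get started|sign up|demo)\b")),
    ("MOFU", re.compile(r"\b(vs|versus|best|alternatives?|compare|comparison)\b")),
    ("TOFU", re.compile(r"^(what is|what are|how does|how do|why)\b")),
]


def funnel_stage(question: str) -> str:
    """Return TOFU, MOFU, BOFU, or UNMAPPED for a single question."""
    q = question.lower().strip()
    for stage, pattern in FUNNEL_PATTERNS:
        if pattern.search(q):
            return stage
    return "UNMAPPED"


def build_question_map(questions):
    """Group questions by funnel stage, preserving their original order."""
    question_map = {}
    for question in questions:
        question_map.setdefault(funnel_stage(question), []).append(question)
    return question_map
```

Treat the output as a draft of the question cluster map: the UNMAPPED bucket is where the manual judgement goes.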

Entity and Topic Authority Mapping

Define your brand entity: name, category, products, key people. Map topical authority gaps — which topic areas does your website have no coverage of that competitors rank in? Build a semantic entity relationship map per topic cluster. Output: entity map and topical authority gaps document.

Search Intent Deep Analysis

Classify all target keywords by intent type. Analyse SERP features triggered for each query: featured snippets, People Also Ask, AI Overviews. Identify zero-click risk — queries where AI Overviews are likely to absorb the click before it reaches your result. Output: intent classification matrix.

AI Search Landscape Analysis

This step is missing from most SEO programmes — and it is one of the highest-value inputs in the entire research phase. Test how your target queries actually appear in Google AI Overviews, Perplexity, ChatGPT search, and Bing Copilot right now.

Who is cited? In what format? What gaps exist that your content could fill? Tools: Profound, Otterly.ai, manual testing. Output: AI search gap report. Repeat monthly.

Content Audit of Existing Assets

Audit all existing URLs for indexation status, traffic, rankings, links, schema coverage, and intent match. Tag each URL: keep / optimise / merge / delete. Tools: Screaming Frog, Google Search Console, Ahrefs. Output: content audit spreadsheet with action tags.

Phase 2 - Setup

The setup phase creates the technical and measurement foundation that makes everything else trackable. Without this layer, you cannot prove that your SEO and AEO work is producing results, and you cannot catch problems early enough to fix them.

Analytics Stack Setup

Install and verify: GA4, Google Search Console, Google Tag Manager. Set up goals and conversions. Connect GSC to GA4. Verify that data flows correctly between all three. Configure channel groupings so organic, direct, and referral traffic are correctly segmented. Output: verified analytics stack with working conversion tracking.

AI Traffic Tracking Setup

This is the AEO-specific measurement layer most teams skip. Enable Generative AI traffic source tracking in GA4 by creating custom channel groupings that capture sessions from chat.openai.com, perplexity.ai, gemini.google.com, and similar AI referral sources. Set up Profound or Otterly.ai for AI mention monitoring. Output: AI traffic dashboard live and measuring from day one.
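Before building the channel group in GA4, it helps to test the matching pattern against real source values. The Python sketch below mirrors a custom channel rule of the form "session source matches regex". The domain list combines the referrers named above with chatgpt.com and copilot.microsoft.com, which are assumptions to confirm against your own GA4 referral report; the pattern uses only syntax that GA4's RE2 regex engine also accepts.

```python
import re

# Referral sources named in this guide plus two assumed additions (chatgpt.com,
# copilot.microsoft.com); verify the list against your own referral data.
AI_SOURCE_PATTERN = (
    r"^(www\.)?(chat\.openai\.com|chatgpt\.com|perplexity\.ai|"
    r"gemini\.google\.com|copilot\.microsoft\.com)$"
)
AI_SOURCE_REGEX = re.compile(AI_SOURCE_PATTERN)


def channel_for_source(source: str) -> str:
    """Classify a GA4 session source the way a custom 'AI Assistants' channel would."""
    return "AI Assistants" if AI_SOURCE_REGEX.match(source.strip().lower()) else "Other"


def ai_sessions(rows):
    """Sum sessions for AI sources from (source, sessions) rows exported from GA4."""
    return sum(sessions for source, sessions in rows if channel_for_source(source) == "AI Assistants")
```

The `^` and `$` anchors keep look-alike domains (for example, a hypothetical notchatgpt.com) out of the channel.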

Sitemap Configuration

Generate an XML sitemap that includes all indexable URLs. Exclude: staging environments, thank-you pages, admin pages, duplicate URL patterns. Submit to Google Search Console. Set auto-update if your CMS supports it. Output: live sitemap submitted to GSC.
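Most CMSs generate the sitemap for you, but the exclusion logic is worth seeing explicitly. A minimal Python sketch using only the standard library, assuming you have a flat list of candidate URLs; the exclusion patterns are hypothetical stand-ins for the staging, thank-you, admin, and parameterised URLs listed above.

```python
import re
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical exclusion rules mirroring the list above; adapt them to your URL scheme.
EXCLUDE_PATTERNS = [
    re.compile(r"^https?://staging\."),  # staging environments
    re.compile(r"/thank-you"),           # thank-you pages
    re.compile(r"/admin"),               # admin pages
    re.compile(r"\?"),                   # parameterised duplicates such as ?sort= or ?utm_source=
]


def build_sitemap(urls):
    """Return sitemap XML containing only the URLs that pass every exclusion rule."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        if any(pattern.search(url) for pattern in EXCLUDE_PATTERNS):
            continue
        url_element = ET.SubElement(urlset, "url")
        ET.SubElement(url_element, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

ElementTree escapes characters such as `&` in URLs automatically, which hand-built XML strings often get wrong.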

Robots.txt Configuration

Audit your robots.txt for two separate objectives. First, ensure the right pages are blocked from crawling (staging, admin, duplicate URL patterns). Second, and critically for AEO, ensure AI crawlers are explicitly allowed to access your indexed content.

The bots to allow: ChatGPT-User and OAI-SearchBot (ChatGPT live search retrieval), PerplexityBot, ClaudeBot. Note: GPTBot and Google-Extended control training data collection and can be managed separately from live search retrieval. Output: optimised robots.txt live.
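A quick way to confirm the file does what you intend is to parse it with Python's standard `urllib.robotparser` and ask whether each bot may fetch a sample URL. The sketch below, assuming /admin/ and /staging/ as placeholder private paths, generates one group per AI bot named above plus a catch-all group, then checks the result. GPTBot and Google-Extended are deliberately left out, matching the note that training crawlers are managed separately.

```python
from urllib.robotparser import RobotFileParser

# AI crawlers this guide recommends allowing. Training crawlers such as GPTBot
# and Google-Extended are intentionally left to a separate decision.
AI_SEARCH_BOTS = ["OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot"]
BLOCKED_PATHS = ["/admin/", "/staging/"]  # placeholder private paths


def build_robots_txt(bots, blocked_paths):
    """Write one group per named bot plus a catch-all '*' group."""
    groups = []
    for agent in bots + ["*"]:
        lines = [f"User-agent: {agent}"]
        # Disallow rules come first: urllib.robotparser uses the first matching rule,
        # Google uses the most specific one, and this order satisfies both.
        lines += [f"Disallow: {path}" for path in blocked_paths]
        if agent != "*":
            lines.append("Allow: /")
        groups.append("\n".join(lines))
    return "\n\n".join(groups) + "\n"


def can_crawl(robots_txt, agent, url):
    """Ask the standard-library parser whether `agent` may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)
```

Each AI group repeats the Disallow lines because a crawler that matches a named group ignores the `*` group entirely.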

LLMs.txt Creation

Create a file at yourdomain.com/llms.txt — a Markdown-formatted, plain-text document that gives AI models a curated index of your site's best content. Include: pillar pages, key product and service pages, FAQ pages, About and Team pages, and any original research content. Maximum 100KB, UTF-8 encoded.

Think of it as a sitemap written in plain language for AI engines, not for Googlebot. Update quarterly. Output: live llms.txt at your domain root.
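The layout below follows the llms.txt proposal published at llmstxt.org: an H1 site name, a blockquote summary, then H2 sections of Markdown link lists. It is a minimal Python sketch; the section names, page titles, and example.com URLs are placeholders, and the size check simply enforces the 100KB limit this guide recommends.

```python
def build_llms_txt(site_name, summary, sections, max_bytes=100 * 1024):
    """Render an llms.txt file.

    `sections` maps an H2 heading to a list of (title, url, description) tuples.
    Raises ValueError if the UTF-8 encoded result exceeds `max_bytes`.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, pages in sections.items():
        lines += [f"## {heading}", ""]
        lines += [f"- [{title}]({url}): {description}" for title, url, description in pages]
        lines.append("")
    text = "\n".join(lines)
    size = len(text.encode("utf-8"))
    if size > max_bytes:
        raise ValueError(f"llms.txt is {size} bytes, over the {max_bytes}-byte limit")
    return text
```

Keeping the page list in code (or in a CMS field) makes the quarterly update a matter of editing one list rather than hand-editing Markdown.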

Google Business Profile (if local)

Create or optimise your GBP with consistent NAP (name, address, phone), correct categories, photos, services, and seeded Q&A. Connect to GA4. Set up review monitoring.

For hospitality brands, this is an especially high-priority AEO signal — AI engines use GBP data to build location-specific entity knowledge. Output: fully optimised GBP live.

Brand Entity Documentation

Write a consistent brand entity block: company name, category, products/services, founders, mission, founding date.

This exact language, not variations of it, should be deployed on your About page, in your Organization schema, on your LinkedIn company page, in your Google Business Profile description, and on any third-party directories where your brand appears.

If your company is eligible, seed a Wikipedia or Wikidata entry. Output: brand entity block deployed consistently across all web properties.

Phase 3 — Technical Foundation

The technical phase resolves every crawlability, indexation, speed, and structured data issue before content investment begins. Without a clean technical foundation, even perfectly optimised content will not rank or earn AI citations consistently. This phase is done once and maintained monthly.

Core Web Vitals Audit and Fix

Run Google PageSpeed Insights and Lighthouse on your key pages. Measure LCP (Largest Contentful Paint), CLS (Cumulative Layout Shift), and INP (Interaction to Next Paint, the newest and most commonly failing metric).

Fix: compress all images to WebP or AVIF format under 200KB, defer non-critical JavaScript, eliminate layout shift caused by fonts and embeds, and improve server response time. Target: all Core Web Vitals in the green range. Check monthly.
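To make "in the green range" concrete, the sketch below encodes Google's published Core Web Vitals thresholds (good: LCP ≤ 2.5 s, INP ≤ 200 ms, CLS ≤ 0.1; poor: above 4 s, 500 ms, and 0.25) together with the 200KB image target from this step. It assumes you have already collected field or lab values, for example from a PageSpeed Insights report; the function names are hypothetical.

```python
# Google's published Core Web Vitals thresholds, as (good_limit, poor_limit).
# Google assesses each metric at the 75th percentile of page loads.
CWV_THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}
MAX_IMAGE_BYTES = 200 * 1024  # the 200KB image target from this step


def rate(metric: str, value: float) -> str:
    good_limit, poor_limit = CWV_THRESHOLDS[metric]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


def failing_metrics(measurements: dict) -> list:
    """Metrics not yet in the green ("good") range."""
    return [metric for metric, value in measurements.items() if rate(metric, value) != "good"]


def oversized_images(image_sizes: dict) -> list:
    """Image paths whose file size in bytes exceeds the 200KB target."""
    return sorted(path for path, size in image_sizes.items() if size > MAX_IMAGE_BYTES)
```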

Crawlability and Indexation Audit

Crawl the full site with Screaming Frog. Fix: broken links (4xx errors), redirect chains longer than one hop, orphan pages with no internal links pointing to them, and resources blocked from crawling that should be accessible. Verify that the GSC Page indexing report (formerly the Coverage report) shows no unexpected excluded pages. Output: zero crawl errors report.

HTTPS and Security

Verify full HTTPS implementation with no mixed content warnings. Confirm the SSL certificate is valid and not expiring within 60 days. Fix any security headers flagged by a browser developer tools audit. Output: 100% HTTPS with no mixed content.

URL Structure Optimisation

Enforce: all URLs lowercase, hyphens as word separators, descriptive slugs that reflect the page's primary keyword, logical folder structure that reflects content hierarchy.

Remove dynamic URL parameters where possible. Implement canonical URLs. Set up 301 redirects for any changed URLs. Output: clean URL architecture with no dynamic parameter duplication.
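The URL rules above can be applied mechanically to produce candidate slugs and the 301 pairs they imply. This Python sketch is a simplification under stated assumptions: it drops every query string and fragment (some sites need pagination parameters kept), and it strips accents while silently dropping characters that have no ASCII equivalent.

```python
import re
import unicodedata
from urllib.parse import unquote, urlsplit, urlunsplit


def normalise_url(url):
    """Apply the URL rules above: lowercase, hyphen separators, no query string."""
    parts = urlsplit(url)
    path = unquote(parts.path)
    # Strip accents; characters with no ASCII equivalent are dropped (an assumption).
    path = unicodedata.normalize("NFKD", path).encode("ascii", "ignore").decode("ascii")
    path = path.lower()
    path = re.sub(r"[\s_]+", "-", path)        # spaces and underscores become hyphens
    path = re.sub(r"[^a-z0-9/.\-]", "", path)  # drop other punctuation
    path = re.sub(r"-{2,}", "-", path)         # collapse repeated hyphens
    # Query string and fragment are discarded entirely.
    return urlunsplit((parts.scheme, parts.netloc.lower(), path, "", ""))


def redirect_pairs(urls):
    """(old, new) pairs for every URL whose normalised form differs; each needs a 301."""
    pairs = []
    for url in urls:
        new_url = normalise_url(url)
        if new_url != url:
            pairs.append((url, new_url))
    return pairs
```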

Canonical Tags and Redirect Map

Deploy canonical tags on all pages to handle pagination, URL parameter variations, regional and language variants, and near-duplicate pages. Separately, build a complete redirect map for all URL changes, deleted pages, and any site migration work.

Implement in your CMS and test every redirect. Avoid redirect chains: every redirect should reach its final URL in a single hop. Output: zero duplicate content issues and a complete redirect map live.
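Redirect chains usually creep in when an already-redirected URL is moved again. The sketch below, assuming your redirect map is a simple source-to-target dictionary of paths, rewrites every entry to point at its final destination and fails loudly on loops. The function names are illustrative.

```python
def flatten_redirects(redirects):
    """Point every old URL straight at its final destination so no chain exceeds one hop.

    `redirects` maps source URL -> target URL. Raises ValueError on a redirect loop.
    """
    flattened = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        while target in redirects:  # the target is itself redirected: follow it
            if target in seen:
                raise ValueError(f"Redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flattened[source] = target
    return flattened


def chains_longer_than_one_hop(redirects):
    """Sources whose target is itself redirected."""
    return sorted(source for source, target in redirects.items() if target in redirects)
```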

Internal Link Architecture Audit

Map your internal linking structure using Screaming Frog or Ahrefs. Ensure: pillar pages receive the highest volume of internal links from cluster articles, all orphan pages are eliminated, and anchor text is descriptive and keyword-relevant (never "click here" or "read more"). Internal links are how you direct both crawl priority and topical authority signals toward your most important pages. Output: internal link map with fix list.
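If you export the crawl's link data as (source, target, anchor text) rows, which crawlers such as Screaming Frog typically allow, the three checks above reduce to a few lines. This Python sketch assumes that export shape; the generic-anchor list is a hypothetical starting point.

```python
from collections import Counter

# Hypothetical list of anchors the guide treats as non-descriptive.
GENERIC_ANCHORS = {"click here", "read more", "learn more", "here"}


def audit_internal_links(pages, links):
    """pages: all indexable URLs; links: (source, target, anchor_text) tuples from a crawl export."""
    inbound = Counter(target for source, target, _ in links if source != target)
    orphans = sorted(page for page in pages if inbound[page] == 0)
    generic_anchors = sorted(
        {(source, target) for source, target, anchor in links
         if anchor.strip().lower() in GENERIC_ANCHORS}
    )
    # Most-linked pages first: pillar pages should sit at the top of this list.
    most_linked = [page for page, _ in inbound.most_common()]
    return {"orphans": orphans, "generic_anchors": generic_anchors, "most_linked": most_linked}
```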

Technical Completions

Additional technical steps at this phase: hreflang tags for multilingual sites, JavaScript SEO audit to ensure critical content is not JS-only and invisible to crawlers, structured data baseline deployment (covered in detail in Phase 6), HowTo and Article schema on all relevant content, crawl budget optimisation for large sites, and a branded custom 404 page that returns the correct 404 status code. Each of these is a one-time implementation with monthly monitoring.

Phase 4 — On-Page Optimisation

On-page optimisation ensures that every page communicates its primary topic clearly to both human readers and machine crawlers. This phase is done once across the full site and maintained as new content is published.

On-Page Checklist

The on-page phase covers eight distinct tasks that must be completed on every key page:

- Meta titles: Unique per page, 50–60 characters, primary keyword first, brand suffix. Different from the H1.

- Meta descriptions: 150–160 characters, answer-oriented, include a soft CTA. These are marketing copy — they sell the click in traditional search and signal content scope to AI engines.

- H1–H6 heading hierarchy: One H1 per page containing the primary keyword. H2 for main sections. H3 for subsections. No skipped levels. No headings used purely for visual styling.

- Semantic HTML tagging: Implement HTML5 semantic elements — <article>, <section>, <header>, <main>, <footer>. This is critical for AI content parsing — AI engines use semantic structure to understand content hierarchy.

- Image alt text: Descriptive alt text on all non-decorative images. Under 125 characters. No "image of" prefix. Contextually relevant to the surrounding content.

- Open Graph and social meta tags: OG title, description, and image (1200×630px) on all pages. Twitter Card tags. Social previews should match page intent.

- Breadcrumb navigation: Implement breadcrumbs with BreadcrumbList schema. Deploy site-wide. Verify in GSC Rich Results.

- Keyword density and NLP optimisation: Target keyword and semantic variants appear naturally in H1, first 100 words, H2 headings, and body. Use SurferSEO or Clearscope to score content against NLP benchmarks.
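Several of these checks are mechanical and can run on every page before publishing. The sketch below uses Python's built-in `html.parser` to test title and meta description length, a single H1, skipped heading levels, and the alt-text rules. It is a simplified illustration rather than a replacement for a crawler, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class OnPageParser(HTMLParser):
    """Collects the title, meta description, heading levels, and problem alt texts."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self.headings = []  # heading levels in document order, e.g. [1, 2, 3]
        self.bad_alts = []  # alt values that are missing, 125+ characters, or start "image of"
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and attrs.get("name") == "description":
            self.description = attrs.get("content") or ""
        elif len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.headings.append(int(tag[1]))
        elif tag == "img":
            alt = attrs.get("alt")
            # alt="" is valid for decorative images, so only a missing attribute counts.
            if alt is None or len(alt) >= 125 or alt.lower().startswith("image of"):
                self.bad_alts.append(alt)

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def check_page(html):
    """Return a list of on-page issues; an empty list means every check passed."""
    parser = OnPageParser()
    parser.feed(html)
    issues = []
    if not 50 <= len(parser.title.strip()) <= 60:
        issues.append("title length")
    if not 150 <= len(parser.description) <= 160:
        issues.append("description length")
    if parser.headings.count(1) != 1:
        issues.append("h1 count")
    levels = parser.headings
    if any(later - earlier > 1 for earlier, later in zip(levels, levels[1:])):
        issues.append("skipped heading level")
    if parser.bad_alts:
        issues.append("alt text")
    return issues
```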

Phase 5 — Content Architecture

The content phase builds the pillar-cluster architecture that creates compounding topical authority, the structural signal AI engines use to identify websites that comprehensively cover a topic rather than containing isolated pages that happen to mention the right keywords.

Pillar Page Architecture

Build one pillar page per core topic cluster: 3,000–5,000 words, comprehensive coverage of the topic, links to all spoke articles, at least one BOFU conversion CTA.

Pillar pages must be optimised for both traditional SEO (ranking for broad head terms) and AEO (structured with direct-answer openings, FAQ sections, and entity clarity). One pillar page per cluster, built once, updated quarterly.

Topic Cluster and Spoke Content

Produce spoke articles for each pillar, each targeting a specific subtopic or long-tail keyword within the cluster. Every spoke links back to the pillar. Length: 1,000–2,500 words. Each piece is mapped to a funnel stage (TOFU / MOFU / BOFU) and to a specific question from the question cluster map built in Phase 1. This is the monthly content engine of the programme.

AEO Content Writing Format

Every piece of content produced in this phase must follow the AEO writing format. This is not optional: it is the structural rule that determines whether content earns AI citations.

- Direct-answer intro: Answer in the first sentence. No preamble, no context-first. The answer comes before the background.

- Definition + use case: For any concept: what is X (2–3 sentences) → immediately followed by a real-world use case example. This format is portable: AI engines can extract it independently of the surrounding content.

- Step-by-step H3 structure: For process content: numbered H3 steps, each complete and self-explanatory with 2–4 sentences of explanation. AI engines extract sequential content from this format reliably.

- FAQ block at end: Self-contained answers, 40–60 words each. Readable in isolation with no pronouns referencing other answers.

FAQ Architecture and FAQ Banks

Build a dedicated FAQ section on every pillar page, service page, and blog post. Each FAQ answer must be self-contained, readable in isolation, 40–60 words, no reference to "the above method" or "as mentioned earlier." Source questions from: People Also Ask for your target queries, AlsoAsked.com question trees, sales team call notes, Profound AI query data. Each FAQ section needs FAQPage schema implemented. Expand the FAQ bank monthly as new questions emerge.
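The self-containment rule can be linted automatically. A minimal Python sketch, assuming plain-text answers: it checks the 40–60 word range and looks for a hypothetical, non-exhaustive list of phrases that make an answer depend on its neighbours.

```python
# Phrases that tie an answer to content outside itself; a starting list, not exhaustive.
DEPENDENT_PHRASES = ["the above", "as mentioned earlier", "as mentioned above", "see above", "previous answer"]


def faq_answer_issues(answer, min_words=40, max_words=60):
    """Return problems that stop an FAQ answer from standing on its own."""
    issues = []
    word_count = len(answer.split())
    if not min_words <= word_count <= max_words:
        issues.append(f"{word_count} words (target {min_words}-{max_words})")
    lowered = answer.lower()
    for phrase in DEPENDENT_PHRASES:
        if phrase in lowered:
            issues.append(f"depends on other content: '{phrase}'")
    return issues
```

Running this over the FAQ bank each month catches answers that drift out of range as they are edited.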

BOFU Conversion Content

Create the content that converts AI-referred traffic into enquiries and leads: competitor comparison pages (Your Brand vs X), use-case landing pages by industry or company type, a pricing page with embedded FAQs.

BOFU pages generate the most engaged AI referral traffic: users arriving from AI citations at the evaluation stage convert at significantly higher rates than informational traffic. Build one BOFU page per core product or service.

Definition Content, Content Refresh, Original Research, Lead Magnets

Four additional content investments that compound over time: definition and glossary pages for core industry terms (pure AEO content optimised for "what is X" AI queries); a quarterly content refresh calendar to update statistics, FAQ sections, and schema on all published content; at least one piece of original research or proprietary data per quarter (the single highest-authority AEO signal: when other sites cite your data, AI engines see high-authority citations pointing to you); and one downloadable lead magnet per content cluster to convert organic and AI-referred traffic into email subscribers.

Phase 6 — AEO Deep-Dive

Phase 6 is where the AEO-specific work lives: the layer, built on top of technical SEO and content architecture, that determines whether your pages actually earn AI citations. Every step here directly addresses one of the three citation filters described at the start of this guide.

Full Schema Audit and Gap Fill

Audit every live page for schema coverage. The types required for a fully AEO-ready website:

- Organization — homepage and About page: name, URL, logo, description, sameAs array (all social profiles and web properties)

- WebPage — every page

- BlogPosting / Article — every blog post and guide: headline, author with Person schema, datePublished, dateModified

- FAQPage — every page with Q&A content: questions and answers must exactly match on-page text

- HowTo — all process and tutorial content: step array with name and text per step

- BreadcrumbList — site-wide

- Product — product and pricing pages

- DefinedTerm — glossary and definition pages

All schema implemented in JSON-LD format. Validate every implementation with Google's Rich Results Test before marking complete.
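As an illustration of the JSON-LD format, the Python sketch below builds Organization and FAQPage objects using schema.org property names (`name`, `url`, `logo`, `description`, `sameAs`, `mainEntity`, `acceptedAnswer`) and wraps them in a script tag. The helper functions and example values are hypothetical; validate the output with the Rich Results Test as described above.

```python
import json


def organization_jsonld(name, url, logo, description, same_as):
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "description": description,
        "sameAs": same_as,  # social profiles and other web properties
    }


def faq_jsonld(qa_pairs):
    """qa_pairs: (question, answer) strings copied verbatim from the visible page."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def script_tag(data):
    # Escape "</" so the JSON cannot prematurely close the surrounding <script> element.
    payload = json.dumps(data, ensure_ascii=False, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Generating the FAQPage answers from the same strings that render on the page is the simplest way to keep them an exact match.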

Conversational Query Optimisation

Rewrite all informational pages to answer conversational queries directly. Test each key URL manually: open ChatGPT and Perplexity, ask "What is [X]?", "How do I [Y]?", "Best [Z] for [use case]?", and check whether your page is cited. If it is not, identify what the cited page has that yours does not: a clearer direct-answer opening? A FAQ section? More specific entity language? Fix those gaps. This is the core AEO iteration loop, run monthly.

Featured Snippet Optimisation

Identify queries where your pages rank in positions 1–10 but hold no featured snippet. For each, rewrite the answer block immediately after the relevant H2 heading: a direct 40–50 word answer in paragraph format, followed by a numbered or bulleted list if the query is about a process or comparison.

This format most reliably captures featured snippets and serves as the primary content AI Overviews extract. Tools: Ahrefs, Semrush, GSC.

AI Overview and SGE Optimisation

Analyse which queries in your keyword set trigger Google AI Overviews. For each query where you are not currently cited, audit the cited pages for structural differences. Are they using direct-answer openings your pages lack? Are their entity signals clearer? Do they have FAQPage schema where you do not? Optimise for the specific gap, not generically. Track progress monthly using Profound and manual testing.

Entity Authority Building

Your brand entity must be represented consistently across every platform where it appears. Run an entity consistency audit: search your brand name across Google, ChatGPT, and Perplexity and note exactly how each platform describes you.

Then compare that to your website homepage, About page, Google Business Profile, LinkedIn company page, Crunchbase, and any industry directories. Any inconsistency in name, category, or description is an entity signal that needs standardising. Repeat quarterly.

E-E-A-T Signal Implementation

Google's AI Overviews weight E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals heavily when selecting citations. Deploy across all content: author bios with specific credentials on every article (not generic "the team" attributions), expert contributor bylines where relevant, inline citations and source links for all statistics, a team page with individual expertise signals, and an About page with company history and credentials. Each author needs a Person schema-marked author page with name, job title, and URL.

Voice Search and Zero-Click Optimisation

Identify queries in your keyword set that are likely to be answered via voice (question-format queries, local queries, how-to queries). For these specifically, format answers to be short (29 words on average for voice results), conversational in tone, and direct. Add Speakable schema to mark the sections of your pages that contain the best voice answer candidates; this signals to Google Assistant and other voice interfaces which content to surface. Google documents Speakable as a beta feature aimed mainly at news publishers, so treat it as a supplementary signal rather than a requirement.

Citation Tracking, Question Cluster Mapping, Reddit/Quora, LLMs.txt Maintenance

Four ongoing AEO activities that run monthly and quarterly: manual citation testing (test 20+ key queries across ChatGPT, Perplexity, Google AI Overviews, and Bing Copilot monthly, and log whether you are cited, your position, and whether the description of your brand is accurate); full-funnel question cluster mapping (ensure every content piece is mapped to a TOFU, MOFU, or BOFU question); authentic Reddit and Quora presence (Perplexity heavily mines Reddit, so 2–4 genuine answers per week in relevant threads build both AEO authority and referral traffic); and quarterly llms.txt updates to add new high-value pages and remove outdated ones.
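A spreadsheet works for the monthly citation log, but structuring it as records makes the summary numbers easy to compute. This Python sketch assumes one record per query-engine test with the fields described above (cited, position, description accuracy); the class and function names are illustrative.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class CitationTest:
    query: str
    engine: str                 # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    cited: bool
    position: Optional[int]     # order among cited sources, None if not cited
    description_accurate: bool  # does the engine describe the brand correctly?


def citation_rate_by_engine(tests):
    """Share of tested queries in which each engine cited you."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for test in tests:
        totals[test.engine] += 1
        cited[test.engine] += int(test.cited)
    return {engine: cited[engine] / totals[engine] for engine in totals}


def inaccurate_descriptions(tests):
    """(engine, query) pairs where you were cited but described incorrectly."""
    return [(t.engine, t.query) for t in tests if t.cited and not t.description_accurate]
```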

Phase 7 — Distribution

Distribution amplifies the authority signals that AI engines use to trust and cite your content. On-site optimisation builds the foundation. Off-site distribution builds the entity authority that makes AI engines confident enough to recommend you.

Digital PR and Link Building

Build backlinks from industry publications, expert guest posts, podcast appearances, original research citations, and journalist requests (HARO and equivalent). Target publications with a domain authority of 40+. This builds both traditional SEO authority (backlinks) and AEO authority (brand mentions in trusted external sources). Track monthly link acquisition velocity against competitors. Output: monthly link building targets and outreach tracker.

Guest Blog Publishing

Publish 2–4 guest articles per month on relevant industry blogs. Each must cite your website, include branded anchor text, and demonstrate genuine expertise, not thin content published for the link alone. These build backlinks and entity authority simultaneously: an article on a respected industry publication that mentions your brand name alongside your category is one of the strongest entity signals available.

Podcast Appearances, Social Pipeline, Email Newsletter, Content Syndication

Four distribution channels that compound over time: podcast and video appearances (show notes provide backlinks and brand mentions that AI engines use for entity validation); a social content pipeline that drives traffic from LinkedIn, X, and Instagram back to pillar and cluster content; a monthly email newsletter featuring the best blog posts and new FAQ content; and selective content syndication on Medium and LinkedIn Articles with canonical tags pointing back to the original. Never syndicate without a canonical tag; without it, you create duplicate content that splits your ranking authority.

Phase 8 — Monitoring

The monitoring phase makes the programme measurable and self-correcting. Without these systems, you cannot tell whether AEO work is producing citations, whether content is gaining or losing ground, or whether AI Overviews are cannibalising your organic traffic.

Weekly Pulse Report

Track 5 metrics weekly: organic sessions, keyword rankings for the top 20 target queries, conversions from organic, crawl errors flagged in GSC, and AI traffic sessions. Add brief commentary on anything that changed more than 10% week-over-week. Keep this to five numbers: the purpose is to flag problems early, not to produce a comprehensive analysis every week.

Monthly Content Performance Review

Review: cluster-level authority growth (are pillar pages gaining ranking authority across the cluster's keywords?), new AI citation appearances (from Profound and manual testing), content gaining and losing position, top organic landing pages, and CTR changes by query type. One insight per review about what the data suggests for next month's content priorities.

AI Citation Monitoring

Monthly AI citation report covering: total citations across ChatGPT, Perplexity, Google AI Overviews, and Bing Copilot; AI share of voice versus your top 3 competitors; accuracy of how AI engines describe your brand (are descriptions positive, neutral, or inaccurate?); and any new citation appearances for queries where you were not previously cited. Tools: Profound for automated tracking, supplemented by manual testing of 20+ key queries. This is the primary AEO performance metric.
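AI share of voice has no single industry-standard formula. One simple definition, assumed here, is your citations divided by all citations logged for you and your tracked competitors over the same query set. A minimal Python sketch with hypothetical function names:

```python
def ai_share_of_voice(citation_counts, brand):
    """Your citations as a share of all citations for you and tracked competitors."""
    total = sum(citation_counts.values())
    return citation_counts.get(brand, 0) / total if total else 0.0


def share_of_voice_table(citation_counts):
    """Every tracked brand's share, largest first."""
    return sorted(
        ((name, ai_share_of_voice(citation_counts, name)) for name in citation_counts),
        key=lambda row: row[1],
        reverse=True,
    )
```

Whatever definition you choose, keep the query set and competitor list fixed between months so the trend is comparable.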

Rankings, Backlinks, GSC Health, Branded Search, CTR Segmentation, Attribution

Six additional monitoring streams: keyword rankings tracked weekly in Ahrefs or Semrush; a monthly backlink audit covering new links gained, links lost, and toxic links to disavow; a monthly GSC coverage and Core Web Vitals check; branded search volume tracked monthly in GSC (rising branded search volume is a direct proxy signal that AEO is working, because users who heard about you from an AI citation search for you by name); CTR segmentation by query type (if informational CTR drops while rankings hold, AI Overviews are likely answering without clicks, a diagnostic signal that should trigger an AEO response); and multi-touch organic attribution mapping organic's contribution to pipeline and revenue for CEO-level reporting.

Phase 9 — Optimisation

The optimisation phase runs on monthly and quarterly cycles to ensure the programme compounds rather than plateaus. The strategies that produced results at month three are not necessarily the highest-leverage investments at month nine; this phase keeps priorities aligned with where the real opportunities sit.

Quarterly SEO Strategy Review

Full strategic review every quarter: where have keyword opportunities shifted, how have competitors moved, what algorithm updates have affected performance, where are the remaining content gaps, and what new AEO opportunities have emerged that were not on the roadmap three months ago. Reset priorities based on data, not inertia. Output: quarterly strategy update document that replaces the previous quarter's priorities.

Content Decay Prevention

Monthly: identify posts whose traffic has declined more than 20% month-over-month. For each, triage the cause and apply the appropriate fix: update statistics and data, add new FAQ questions sourced from recent search queries, refresh schema, add internal links from newer content, and request re-indexing in GSC. Content decay is silent and cumulative; catching it monthly prevents compounding losses.
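The 20% trigger is easy to automate from a month-over-month sessions export. A minimal Python sketch, assuming you have each URL's sessions for the previous and current month; pages with no previous traffic are skipped because a percentage change is undefined for them.

```python
def decaying_posts(traffic, threshold=0.20):
    """traffic maps URL -> (last_month_sessions, this_month_sessions).

    Returns URLs whose sessions fell by more than `threshold`, worst decline first.
    """
    declines = []
    for url, (previous, current) in traffic.items():
        if previous > 0:
            change = (current - previous) / previous
            if change < -threshold:
                declines.append((change, url))
    return [url for change, url in sorted(declines)]
```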

Schema Refresh, AEO Content Scoring, Meta A/B Testing, FAQ Expansion, Link Velocity, CEO Report

Six quarterly and monthly optimisation tasks: verify all deployed schema is still 100% accurate to page content (broken or inaccurate schema is an authority loss signal); score all content against the AEO checklist and prioritise rewrites by traffic × AEO gap; A/B test meta titles and descriptions on high-impression pages every 60–90 days; expand the FAQ bank monthly with new questions from Profound data, GSC search terms, and sales call inputs; review link acquisition velocity against competitors quarterly; and produce a three-tier CEO report quarterly covering organic pipeline contribution, AI citation growth, topic authority progress, and the forward investment plan.
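"Traffic × AEO gap" can be turned into a sortable score. The sketch below assumes each page has monthly sessions and an AEO checklist score from 0 to 100, and defines the gap as the share of the checklist not yet met; both the scale and the formula are assumptions, not a standard metric.

```python
def rewrite_priority(pages):
    """pages: dicts with 'url', 'monthly_sessions', and 'aeo_score' (0-100).

    Priority = sessions x (share of the AEO checklist still unmet); highest first.
    """
    scored = [
        (page["monthly_sessions"] * (100 - page["aeo_score"]) / 100, page["url"])
        for page in pages
    ]
    return [url for score, url in sorted(scored, reverse=True)]
```

A high-traffic page that is already nearly compliant can rank below a mid-traffic page with a large gap, which is the point of multiplying the two.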

Before and After: The Same Website, Two Different Outcomes

Before (no LLM SEO):

A hospitality SaaS company's homepage opens with: "We are transforming the guest experience through innovative technology solutions built for the modern hospitality operator."

The robots.txt blocks PerplexityBot. There is no schema. The blog posts all open with three paragraphs of industry context before getting to the point. When a potential client asks ChatGPT "best CRM for hospitality brands with multiple locations," this company is not mentioned. Their competitors with weaker products but better-structured websites are cited instead.

After (9-phase system applied):

The same homepage now opens with: "Venue IQ is a CRM and marketing automation platform for hospitality operators managing two or more locations, connecting booking data, guest profiles, and campaign performance in one dashboard." Organization schema marks the entity.

The robots.txt allows all AI search crawlers. The blog opens every article with a direct-answer sentence. FAQPage schema is live on every post. The brand entity is identical across the website, Google Business Profile, LinkedIn, and Crunchbase.

The llms.txt file guides AI crawlers to the 25 most valuable pages. ChatGPT now surfaces this company by name when a buyer asks about hospitality CRM tools, and branded search volume has grown 34% in the three months since implementation began.


For hospitality brands and tech companies that want this system built and managed rather than implemented alone, our team at Mad Magnet runs the full SEO and AEO programme as a connected system, with brand, digital, and growth designed together from day one.

Start with a conversation here.

Frequently Asked Questions

What is LLM SEO and how does it differ from traditional SEO?

LLM SEO is the practice of optimising a website to be cited by AI-powered answer engines such as ChatGPT, Perplexity, Google AI Overviews, and Copilot. Traditional SEO optimises pages to rank in a list of links; LLM SEO optimises content to be selected as the direct answer before that list appears. The technical foundations are shared: crawlability, site speed, and structured data. The difference lies in content structure (direct-answer format, FAQ blocks, HowTo schema) and authority signals (brand mentions now correlate more strongly with AI citations than backlinks do).

What is the difference between AEO, GEO, and LLM SEO?

AEO (Answer Engine Optimization) is the broadest term, covering all tactics for being cited in AI-generated answers, including featured snippets, voice results, and AI Overviews. GEO (Generative Engine Optimization) focuses specifically on synthesised AI responses from ChatGPT, Claude, and Perplexity. LLM SEO is the tactical implementation layer: the specific structural, technical, and content changes that make a website machine-readable and citable. In practice, the three terms describe the same work, and their implementation overlaps completely.

What schema markup is most important for LLM SEO?

The schema types with the highest direct impact on AI citation rates are FAQPage (signals that extractable answers are present), Organization (establishes the brand as an entity in AI knowledge graphs), Article and BlogPosting with dateModified (signal content type and freshness), HowTo (marks up step-by-step process content), and Person schema on author pages (supports E-E-A-T signals). Implement all schema in JSON-LD format and validate it before publishing: Google's Rich Results Test covers rich-result-eligible types, and the Schema Markup Validator (validator.schema.org) checks any schema.org type.
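To make the FAQPage recommendation concrete, here is a minimal sketch that turns question-and-answer pairs into schema.org FAQPage JSON-LD. The function name `faq_page_jsonld` is hypothetical. The `mainEntity` / `Question` / `acceptedAnswer` / `Answer` nesting follows the schema.org FAQPage structure. The answers passed in should match the visible on-page text.

```python
import json


def faq_page_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps curly quotes and accented characters readable.
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    print(faq_page_jsonld([
        (
            "What is llms.txt and does every website need one?",
            "llms.txt is a plain-text Markdown file placed at your domain root...",
        ),
    ]))
```

The same pattern extends to Article or BlogPosting by swapping the `@type` and adding properties such as `dateModified`.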

What is llms.txt and does every website need one?

llms.txt is a plain-text Markdown file placed at your domain root that gives AI crawlers a curated, human-readable overview of your site: your key pages, content categories, and primary entity information. Unlike sitemap.xml, which lists URLs for traditional indexing, llms.txt is written specifically to help AI engines navigate your information architecture and understand your brand's scope. It is a lightweight implementation (under an hour for a developer) and is particularly valuable for sites with large content libraries. Any website that wants to improve AI citation rates should have one.
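The sketch below generates an llms.txt file in the layout proposed at llmstxt.org: an H1 with the site name, a blockquote summary, then H2 sections containing Markdown link lists with optional notes. The function name `build_llms_txt`, the section names, and the URLs are hypothetical. Treat the output as a starting point to edit by hand.

```python
def build_llms_txt(name, summary, sections):
    """Render an llms.txt file as a string.

    sections maps a section heading to a list of (title, url, note) tuples;
    an empty note omits the ": note" suffix.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            entry = f"- [{title}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical content for the Venue IQ example.
    text = build_llms_txt(
        "Venue IQ",
        "CRM and marketing automation for multi-location hospitality operators.",
        {
            "Product": [
                ("Features", "https://www.example.com/features", "What the platform does"),
                ("Pricing", "https://www.example.com/pricing", ""),
            ],
            "Guides": [
                ("Hospitality CRM guide", "https://www.example.com/blog/crm-guide", "Buyer's guide"),
            ],
        },
    )
    # Serve the result at https://<your-domain>/llms.txt
    print(text)
```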

How do I track whether my content is being cited by AI engines?

The most direct method is a dedicated AI visibility tracking tool. Profound monitors brand and page citations across ChatGPT, Google AI Overviews, Perplexity, and Bing Copilot in one dashboard. Semrush AI Toolkit tracks AI Overview appearances, and Otterly.ai provides competitor comparison data. For proxy metrics, monitor branded search volume in Google Search Console (rising branded search volume is a reliable indicator of AI-driven awareness), set up a custom GA4 channel group that captures traffic from chatgpt.com (formerly chat.openai.com) and perplexity.ai, and track your featured snippet capture rate as a leading indicator of Google AI Overview inclusion.
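The heart of a custom AI channel group is a rule that decides whether a referring hostname belongs to an AI assistant. The Python sketch below expresses that rule as a regular expression so it can be tested offline. The domain list is an assumption: extend it with whatever AI referrers appear in your own reports. Check how GA4 applies regex matching before pasting the pattern into a channel group condition.

```python
import re
from urllib.parse import urlparse

# Assumed list of AI assistant referrer domains; subdomains (e.g. www.) also match.
AI_REFERRER_PATTERN = re.compile(
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|perplexity\.ai"
    r"|copilot\.microsoft\.com|gemini\.google\.com)$"
)


def is_ai_referral(referrer_url):
    """Return True if the referrer's hostname belongs to a listed AI assistant."""
    # urlparse lowercases the hostname; a missing or empty referrer yields None.
    host = urlparse(referrer_url).hostname or ""
    return bool(AI_REFERRER_PATTERN.search(host))


if __name__ == "__main__":
    for ref in [
        "https://chatgpt.com/",
        "https://www.perplexity.ai/search?q=hospitality+crm",
        "https://www.google.com/search?q=hospitality+crm",
    ]:
        print(ref, "->", "AI" if is_ai_referral(ref) else "other")
```

Anchoring on a leading dot or the start of the hostname prevents look-alike domains such as `notchatgpt.com` from being counted as AI traffic.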

How long does it take to see results from LLM SEO?

Technical changes (schema implementation, llms.txt deployment, robots.txt updates) typically begin influencing AI citation rates within 4–8 weeks for queries where the domain already has authority. Content structure changes (rewriting openings to lead with direct answers, adding FAQ blocks) can produce measurable results within 2–4 weeks. Off-site authority signals (brand mentions, entity consistency, digital PR) compound over 3–6 months. Teams starting from a low-authority baseline should plan for a 3–6 month window before citation rates rise consistently and measurably across a target query set.

Is there a risk that optimising for AI search will hurt traditional SEO performance?

No. The optimisations that improve AI citation rates also improve traditional SEO performance. Direct-answer content openings improve featured snippet capture rates. FAQ sections improve People Also Ask appearances. Schema markup makes pages eligible for rich results in traditional search. Content freshness (signalled through dateModified schema) is a positive signal in both traditional and AI search. The 9-phase system in this guide treats SEO and AEO as one integrated programme; the tactics are complementary, not competing.

What is the most commonly missed step in LLM SEO implementation?

The AI Search Landscape Analysis (Phase 1): manually testing how your target queries appear in ChatGPT, Perplexity, and Google AI Overviews before building any strategy. Most teams skip this and optimise blindly, without knowing which queries already trigger AI Overviews, which competitors are currently cited, or what format the cited content uses. Running this audit first takes one to two hours and changes every prioritisation decision that follows. The second most commonly missed step is setting up AI traffic tracking in GA4 (Phase 2); without it, there is no way to measure whether the programme is producing AI-referred traffic and conversions.
